I care about URLs. I have spent time ensuring the URLs for this site are clean, concise, uniform, readable, and easily sharable. I subscribe to the idea that cool URIs don’t change and that URLs are UI. Even while I recognize that the “appification” of the web has minimized the importance of URLs, and I expect URLs to become increasingly niche as computer interfaces become conversational, the URL remains the defining feature of “the web” as we know it today, and I believe we should design URLs to embolden visitors with confidence.

The web has always been a nebulous concept, but at its center is the idea that everything can be linked.

– Rethinking What We Mean by ‘Mobile Web’, John Gruber

The Long, Slow Death of the URL

Anything that reduces the prevalence and usefulness of cross-site linking is a direct attack on the founding principle of the Web.

In September 2013, iOS 7’s Mobile Safari began hiding all but the domain of visited URLs. Seven months later, in April 2014, the Chrome team experimented with burying the URL. The experiment was met with widespread criticism from the development community (Paul Irish called it a “very bad change [which] runs anti-thetical to Chrome’s goals”); the feature was “backburnered” a month later and declared “dead” in 2015. Nevertheless, when OS X 10.10 was released in October 2014, Apple introduced the “Smart Search” field to Safari 8, which brought the URL-hiding features of iOS 7 to the Mac.

Shortly after the advent of installable PWAs in Chrome in 2015, the Chrome team launched Lighthouse, a tool which checks whether a website meets Google’s criteria for PWA compatibility. The tool initially encouraged site owners to configure the site to “allow launching without address bar”—a recommendation which was eventually removed after a lengthy discussion (once again involving Paul Irish) on GitHub and heated discussion around the web.

While that battle seems to have been won, for the time being, the URL fell under attack again when Google released AMP (Accelerated Mobile Pages) in February 2016. AMP content is cached on Google’s servers (to improve performance) and uses a non-standard URL format; once again, this garnered widespread criticism from the development community. In February 2017, Google updated AMP to allow users “to access, copy, and share the canonical URL of an AMP document … [via] an anchor button.” The experience remains suboptimal, however, and has been dubbed “begrudging UI” by John Gruber. iOS 11 may work around this issue; in iOS 11, when AMP pages are shared via the built-in share sheet, the canonical URL will be shared instead of the AMP URL. According to an AMP Tech Lead, Google “specifically requested Apple (and other browser vendors) to do this … [as] AMP’s policy states that platforms should share the canonical URL of an article whenever technically possible.”

The killer feature of the web—URLs—are being treated as something undesirable because they aren’t part of native apps. That’s not a failure of the web; that’s a failure of native apps.

– Regressive Web Apps, Jeremy Keith

Anyone witnessing this must ask themselves: “Why are we, the development community, so passionately protective of URLs? And why are these large internet companies so seemingly hellbent on devaluing them?”

The answer lies in conversational UI.

Defending the URL

Those who defend the URL do so because URLs are universal. URLs work in every conceivable browser, on every conceivable device, in command line utilities without any UI at all, on billboards and business cards … URLs are extremely portable.

The portability of the URL is part of what has made it such an important social tool. In 2012, the Atlantic estimated that 56.5 percent of their social referrals came from “Dark Social” (i.e. direct URL sharing). At the same time, Chartbeat told the Atlantic that direct URL shares accounted for 69 percent of overall social referrals on sites that Chartbeat managed. More recent reports from RadiumOne suggest Dark Social may now account for as much as 84 percent of social referrals.

The URL and the ability for anyone to mint a new one and then propagate it is what makes the web so resilient, so empowering, and so interesting! That I don’t need to ask anyone permission to create a new website or webpage is a kind of ideological freedom that few generations in history have known!

– The death of the URL, Chris Messina

URLs are powerful tools that work everywhere. Except … when it comes to voice.

I’m sure you hear URLs on the radio. You may have even used them in a conversation, but ultimately anything beyond a domain name becomes incredibly difficult to communicate audibly. Those who look forward to conversational UI will argue that URLs don’t actually work on “every conceivable device” but only in text-based mediums.

Conversational UI

In his 1999 article “URL as UI”, Jakob Nielsen predicted that speech could be the death of the URL:

Domain names may die … In the long term, it is not appropriate to require unique words to identify every single entity in the world. That’s not how human language works.

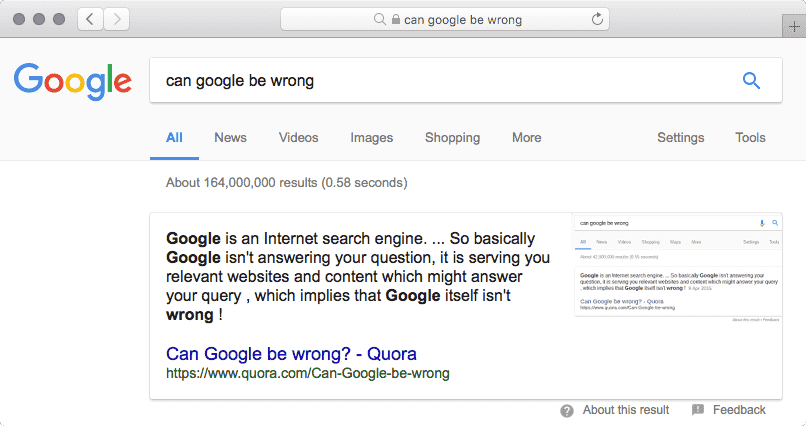

When I look at virtual “personal assistants” like Amazon’s Alexa, Google Assistant, Apple’s Siri, Microsoft’s Cortana, and Samsung’s Viv, it’s easy to see why the URL is starting to be treated like a second-class citizen. Conversational UI will become a huge part of human life in the not-so-distant future, and that is the true villain of the URL. The awesome and terrifying power of conversational UI is that data is aggregated, stripped of sources, and regurgitated as fact. Speed is valued over clarity. The reckless devaluation of the URL is the first step towards a future where queries are spoken and responses can truly be accessed anywhere, anyhow … and (if we are not careful) where fiction becomes fact.

And here's what happens if you ask Google Home "is Obama planning a coup?" pic.twitter.com/MzmZqGOOal

— Rory Cellan-Jones (@ruskin147) March 5, 2017

Our ability to understand who we’re talking to on the web is underpinned by search engines.

Why is Google leading this charge? Because they are the only ones who can. The cataloging and indexation of data has always been Google’s domain. Now, with forays into AI and neural networking, Google is in a position to turn the web into a closed API for clean, aggregate data.

Isn’t [Google] trying to guarantee the open Web stays alive? Not necessarily. [Google]’s goal is to gather as much rich data as possible, and build AI. Their mission is to have an AI provide timely and personalized information to us, not specifically to have websites provide information. Any GOOG concerted efforts are aligned to the AI mission.

We see examples of this in Google’s “Featured Snippets”, which have been referred to as Google’s “One True Answer”, “Direct Answer” and “Rank #0”. Featured Snippets scrape content from websites, display only the relevant data directly at the top of Google’s search results, and power Google’s Assistant. Some argue that this eliminates the need to visit the originating page. While the URL is displayed, the value of the link is diminished: the content has already been delivered, the “knowledge” gained. In September 2017, Google confirmed that AMP links may be displayed in featured snippets of mobile search results, providing searchers even less incentive to visit the originating website. The heart of the matter is: can we trust Google?

A big corporation (in [this] case Google) decides what is good for [us] and what [we] can find … and buy and use.

– The death of the URL, Michael Bumann

By removing our ability to navigate, choose, and share freely … [these companies are] exchanging our freedom for a promise that they’ll keep us safe, give us everything we need, and do all the choosing of what’s “good enough” for us …

– The death of the URL, Chris Messina

Well “You can’t blame me”, says the media man

… I just point my camera at what the people want to see

Man it’s a two way mirror and you can’t blame me”

– Cookie Jar, Jack Johnson

Fortunately, Google is taking responsibility and actively trying to clean up Featured Snippets. As a consumer, I admire Google’s initiative. I appreciate getting answers at the touch of a button, at the tip of the tongue, without having to pore over pages of content, links, and advertising just to get at the good bits. It saves me valuable time, and on the web, time is capital. At Google, content is cheap.

URLs are the web. They are critical to assessing credibility in the digital age.

Microsoft Research conducted an eyetracking study of search engine use that found that people spend 24% of their gaze time looking at the URLs in the search results.

We found that searchers are particularly interested in the URL when they are assessing the credibility of a destination. If the URL looks like garbage, people are less likely to click …

– URL as UI, Jakob Nielsen

The war on the URL is far from over. If we want the URL to live on, it is our responsibility, as web practitioners, to design URLs that are easily spoken and easily remembered.

Designing URLs for Humans

Note: This section contains technical details of how to implement URL designs in Apache 2.4; be aware of your server type and version, as they may differ.

URLs are prime real estate. Invest in your URLs. Make URLs a priority.

URLs are for humans. Design them for humans.

Any regular semi-technical user of your site should be able to navigate 90% of your app based off memory of the URL structure. In order to achieve this, your URLs will need to be pragmatic.

– URL Design, Kyle Neath

Designing URLs mostly means leaving information out. When designing URLs there are two basic elements to keep in mind: simplicity and consistency. These basic elements can be applied to each of the following rules for URL design. URLs should be:

- short

- easy-to-type

- hierarchical (visualize the site structure)

- malleable (enable navigation by removing segments of the URL)

- persistent

I want people to be able to copy URLs. I want people to be able to hack URLs. I'm not ashamed of my URLs …I'm downright proud.

— Jeremy Keith (@adactio) May 23, 2016

URL Length

The full, indexed URL (including the scheme and host) should be between 75–115 characters in length to allow the full URL to be displayed in SERPs and preserve the readability and shareability of links.

In 2007, a MarketingSherpa study found that shorter URLs were 250% more likely to be clicked in search results when directly compared with longer URLs.

URL Simplicity

Use only lowercase letters, digits and hyphens (i.e. dashes, -) in URLs.

Use short, full, and commonly known words. If a path segment has a dash or special character in it, the word is probably too long.

– URL Design, Kyle Neath

Hyphens vs Underscores

While they are technically permitted as “unreserved characters” in URIs, do not use tildes or underscores (i.e. ~ or _) in URLs, and use periods only when file extensions are required.

Hyphens feel native in the context of URLs as they are allowed in hostnames and domain names, while other characters (such as underscores) are not. Additionally, hyphens are easier to type—no need to use the ⇧ key—and hyphens are not obscured by underlines. Also, Google recommends using hyphens instead of underscores.

While emoji domains are cool (I own a few myself: 🌩.ws and ☄.ws), non-ASCII characters are difficult to type and sometimes difficult to differentiate. If you are targeting an English speaking audience, use only unreserved ASCII characters in URLs.

When using a post or product title (page subject) in the URL, replace spaces and special characters with hyphens. Typically apostrophes and quotes may be removed altogether. Multiple sequential hyphens should be collapsed to a single hyphen.
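The title-to-URL rules above can be sketched as a small function. This is a minimal sketch, not a definitive implementation: the name slugify is hypothetical, and stripping accents before lowercasing is my own added assumption, based on the advice to stick to ASCII for English-speaking audiences.

```javascript
// Hypothetical helper: turn a page title into a URL slug.
function slugify(title) {
  return title
    .normalize("NFKD")                            // split accented letters into base letter + mark
    .replace(/[\u0300-\u036f]/g, "")              // drop the combining marks (assumption: ASCII-only slugs)
    .toLowerCase()                                // lowercase only
    .replace(/['"\u2018\u2019\u201c\u201d]/g, "") // remove apostrophes and quotes altogether
    .replace(/[^a-z0-9]+/g, "-")                  // each run of spaces/special characters becomes one hyphen
    .replace(/^-+|-+$/g, "");                     // trim leading and trailing hyphens
}

console.log(slugify("Google’s Various Enterprises")); // googles-various-enterprises
console.log(slugify("In Defense of the URL"));        // in-defense-of-the-url
```

Because each run of non-alphanumeric characters is replaced in a single step, sequential hyphens never appear in the output.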

Lowercase URLs

Browsers and search engines treat each unique URL as if it contains unique content, because it is technically possible, through web server configuration, to serve different content at each distinct URL. If distinct URLs are not appropriately redirected or canonicalized, browser caching, edge caching and search engine results may be impacted. As best practice, redirection should be preferred over canonicalization.

While URL paths are case sensitive (to support case-sensitive file systems, e.g. UNIX), domain names and hostnames make no distinction between upper and lower case. Use only lowercase letters in URLs to standardize their appearance and avoid technical issues.

To ensure that all URLs are appropriately redirected to their lowercase equivalent, include the following RewriteMap in your site’s Apache configuration files (e.g. httpd.conf or vhosts.conf):
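The difference between host and path is easy to observe with the standard URL class available in modern browsers and Node.js. A small sketch; the helper name lowercaseTarget and the example.com addresses are hypothetical:

```javascript
// The URL parser lowercases the scheme and host, but leaves the path alone.
const parsed = new URL("HTTP://WWW.Example.COM/About/Team");
console.log(parsed.href); // http://www.example.com/About/Team

// Hypothetical helper: return the lowercase redirect target,
// or null when the path is already lowercase and no redirect is needed.
function lowercaseTarget(href) {
  const url = new URL(href);
  const lower = url.pathname.toLowerCase();
  if (lower === url.pathname) return null;
  url.pathname = lower; // the query string and fragment are left untouched
  return url.href;
}

console.log(lowercaseTarget("https://example.com/About/Team?x=Y")); // https://example.com/about/team?x=Y
```

A helper like this can be handy for spot-checking which URLs in a sitemap or crawl report would need a redirect.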

# Add map to force lowercase URLs

RewriteMap lowercase int:tolower

Then, in your .htaccess file, add the following redirect:

# Lowercase URLs

# Skip case sensitive files/directories manually

# Skip downloads directory

RewriteCond %{REQUEST_URI} !^/downloads/

# Skip dotfiles

# Important for letsencrypt challenge authentication

RewriteCond %{REQUEST_URI} !/\.

RewriteCond $1 [A-Z]

RewriteRule (.*) ${lowercase:%{REQUEST_URI}} [R=301,L]

Apache’s mod_speling may also be used to resolve this issue by letting the server decide which file to serve.

Remove File Extensions

URLs should be independent of implementation. File extensions generally denote the underlying technology powering a website—information which is irrelevant to end users.

Note: Files which are intended to be downloaded typically should include an extension.

One benefit of using extensionless URLs is that if you decide to migrate from one language to another (e.g. ASP to PHP), the URLs will not need to be changed or redirected (which always results in a slight SEO penalty and dip in organic traffic).

To enable Apache to serve extensionless URLs, add the following RewriteRule to your .htaccess file:

# Support extensionless paths

RewriteCond %{REQUEST_FILENAME} !-f

RewriteCond %{REQUEST_FILENAME} !-d

RewriteCond %{REQUEST_FILENAME} !-l

RewriteRule ^([^\.]+[^/])/*$ $1.html [L]

In this setup, the files will be available both at the extensioned path (with the .html) and without. If you do not canonicalize these files, search engines may flag these distinct URLs as duplicate content.

To resolve this, redirect or canonicalize the URLs, or disable access to the extensioned URL altogether:

# Disable direct access to files with extensions

RewriteCond %{REQUEST_URI} \.(p?html?|php)$ [NC,OR]

# Disable direct access to index files

RewriteCond %{REQUEST_URI} /index$ [NC]

RewriteCond %{ENV:REDIRECT_STATUS} ^$

RewriteRule ^ - [R=404]

Another solution is to enable content negotiation in your web server. Your web server will attempt to serve the correct file type, based on Accept headers provided in the request and naming conventions followed on the server.

Trailing Slash

Adding a trailing slash to URLs is largely a matter of preference; there is no distinct technical or SEO advantage to trailing slashes. Be consistent. Avoid mixing URLs with trailing slashes and non-trailing slashes on your site, and be sure to redirect or canonicalize URLs appropriately.

Trailing slashes present some important technical considerations for relative URLs. For example:

- With Trailing Slash: resource relative to /location/ is /location/resource

- Without Trailing Slash: resource relative to /location is /resource
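The two cases can be reproduced with the standard URL class, which resolves a relative reference against a base URL. A minimal sketch, with example.com as a placeholder host:

```javascript
// Resolve the relative reference "resource" against two base URLs
// that differ only by the trailing slash.
const withSlash = new URL("resource", "http://example.com/location/");
const withoutSlash = new URL("resource", "http://example.com/location");

console.log(withSlash.pathname);    // /location/resource
console.log(withoutSlash.pathname); // /resource
```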

This behavior mirrors UNIX file systems in which:

- Slashes denote directories (as / is a directory separator)

- File names do not require a file extension, but cannot contain slashes

Using a trailing slash may make some content more portable, easier to organize, and could save some characters when linking to content via relative URLs. However, a site/page would need to be architected very carefully to take advantage of these benefits.

The question you must ask yourself is: should “directories” always include a trailing slash, or should path segments only include the slashes between hierarchical ancestors? For example, if we navigate upwards from the URL http://example.com/collection/item, which of the following URLs is cleaner?

- http://example.com/collection

- http://example.com/collection/

Essentially, a trailing slash indicates that a URL is part of a hierarchical data structure, meaning the URL contains children or may contain children in the future. If “directories” should always be labeled as such, with a trailing slash, then URLs should probably include the trailing slash, so that URLs will not need to change as a site expands.

Keep in mind, bare domain names are supposed to include a trailing slash; according to RFC 2616 §5.1.2, “if [no absolute path] is present in the original URI, it must be given as /”.

You can confirm this by curling a bare domain name:

$ curl -sILo /dev/null -w %{url_effective} google.com

http://www.google.com/
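The same normalization can be observed without curl. A minimal sketch, assuming a runtime that provides the standard URL class (modern browsers, Node.js); example.com is a placeholder:

```javascript
// Parsing a bare domain yields a path of "/" even though none was typed.
const bare = new URL("http://example.com");

console.log(bare.pathname); // /
console.log(bare.href);     // http://example.com/
```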

However, modern browsers strip the slash from bare domain names.

And yet, they still appear in Google search results…

At the time of writing, Google, Wikipedia, GitHub, Stack Overflow, Twitter and Medium omit the trailing slash, while Facebook, WordPress, reddit and Apple include the trailing slash.

A subjective opinion is that a trailing slash on a URL is like a period at the end of a sentence—it balances the URL and provides closure. On the other hand, omitting the trailing slash is one less character to type.

Because neither solution is objectively superior, I have included Apache rewrites for both solutions below.

To add trailing slashes to URLs:

# Add trailing slashes to URLs

# Ignore files with extensions

RewriteCond %{REQUEST_FILENAME} !-f

RewriteCond %{REQUEST_FILENAME} !-d

RewriteCond %{REQUEST_FILENAME} !-l

RewriteCond %{REQUEST_URI} !(\..*|/)$

RewriteRule .* /$0/ [R=301,L]

To remove trailing slashes from URLs:

# Remove trailing slashes from URLs

RewriteCond %{REQUEST_FILENAME} !-f

RewriteCond %{REQUEST_FILENAME} !-d

RewriteCond %{REQUEST_FILENAME} !-l

RewriteRule ^(.*)/+$ /$1 [R=301,L]

Collapse Multiple Slashes

In most web server implementations, URIs map to an on-disk path (this can vary depending upon configuration). Because modern operating systems do not assign any special meaning to sequential path separators (i.e. /), paths which include multiple path separators (e.g. /path/to/file and /path//to///file) map to the same file. Therefore the following URLs (which are valid according to RFC 3986 §3.3) map to the same location by default:

- https://example.com/path/to/file

- https://example.com/path//to///file

While the same content may be served at both URLs, browsers will not munge the URL by automatically collapsing multiple slashes. This is because it is possible, through web server configuration, to serve different content at both URLs. Browsers and search engines must account for these potential differences and treat each unique URL as unique content unless they are explicitly told not to do so. Because of this, multiple slashes in URLs may affect browser caching and edge caching as well as search engine results if the URL is not appropriately redirected or labeled with a relevant canonical URL.

Multiple slashes also present issues with relative URLs. In relative URLs, “dot-segments” are used to traverse “the URI path hierarchy” (e.g. the .. path segment moves up one “level” in the path hierarchy—equivalent to “parent directory” in the filesystem). If the base element is not correctly defined on a page, multiple path separators will affect the ability to properly navigate the path hierarchy with relative URLs.
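To make the relative URL problem concrete, here is a small sketch using the standard URL class. The helper name collapseSlashes and the relative reference ../sibling are hypothetical. The empty segments created by the extra slashes count as levels in the hierarchy, so the same relative link lands in a different place:

```javascript
// Hypothetical helper: collapse runs of slashes in a path into a single slash.
function collapseSlashes(pathname) {
  return pathname.replace(/\/{2,}/g, "/");
}

// The same relative reference, resolved against a clean and a messy base URL.
const clean = new URL("../sibling", "https://example.com/path/to/file");
const messy = new URL("../sibling", "https://example.com/path//to///file");

console.log(clean.pathname); // /path/sibling
console.log(messy.pathname); // /path//to//sibling

console.log(collapseSlashes("/path//to///file")); // /path/to/file
```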

As such, it is best practice to collapse multiple slashes in URLs.

In Apache, multiple slashes may be collapsed with the following RewriteRule:

# Collapse multiple slashes in URLs

RewriteCond %{THE_REQUEST} //

RewriteRule .* /$0 [R=301,NE]

Stripping URL Parameters (Query String)

Many third party services (like email providers, social sites and affiliates) add query parameters onto URLs shared through their platform or service, so their service will be identified in your site’s analytics, and (assuming you’ve installed the corresponding JavaScript “pixel”) so they can track your traffic themselves.

While this benefits site owners and affiliates, these URLs sacrifice user experience. These additional parameters lengthen the URL, making it difficult to read and unpleasant to share. These parameters may also be inadvertently shared when visitors copy and paste URLs, which can skew analytics data.

Even if you do not directly advertise your site through such services, because of the nature of the web, anyone can link to your site through one of these services and these parameters will be displayed to your visitors.

Help users understand the content of the current view by removing unnecessary query parameters from URLs.

One method to scrub query strings from URLs is to use your web server to redirect to the same URL without the query string:

# Remove query string from the URL

# Note: In Apache 2.4 or later, the "QSD" option (qsdiscard)

# can be used to remove the query string instead of

# appending a "?" to the substitution string

RewriteCond %{QUERY_STRING} .

RewriteRule (.*) /$1? [R=301,L]

The disadvantage of redirecting at the server level is that once the page has been redirected, the query string is no longer available to JavaScript based analytics tools (like Google Analytics). Ideally, you would clean the URL and track the data correctly in analytics tools.

A better solution is to strip the query string from the URL once the page has loaded and a pageview has been tracked. This is possible using JavaScript’s history API.

// Shareable URLs

// Strip query parameters from the URL

(function(l){window.history.replaceState({},'',l.pathname+l.hash)})(location)

This removes the entire query string. Sometimes some URL parameters will be required for rendering a unique view; in that case, you will need a solution that allows these necessary query parameters to be whitelisted. This can be accomplished with a more advanced JavaScript solution like Fresh URL.
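For simple cases, an allowlist can be sketched in a few lines without a library. This is my own minimal sketch, not how Fresh URL works; the function name stripQuery and the parameter names page and sort are hypothetical:

```javascript
// Hypothetical helper: drop every query parameter that is not explicitly allowed,
// and return the path, remaining query string and hash.
function stripQuery(href, allowed = []) {
  const url = new URL(href);
  // Copy the keys first, because deleting while iterating skips entries.
  for (const key of [...url.searchParams.keys()]) {
    if (!allowed.includes(key)) url.searchParams.delete(key);
  }
  return url.pathname + url.search + url.hash;
}

console.log(stripQuery("https://example.com/shoes?utm_source=news&sort=price#reviews", ["sort"]));
// /shoes?sort=price#reviews

// In a browser, once the pageview has been tracked:
// history.replaceState({}, "", stripQuery(location.href, ["page", "sort"]));
```

Keeping the function free of browser globals makes it easy to test outside the page; only the final replaceState call needs a browser. Note that the remaining parameters are re-serialized, so their percent-encoding may be normalized.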

Redirect to secure www

Use https for the SEO benefits; it’s just so easy that there is really no excuse.

In 2015, Chrome 46 introduced changes to mixed content warnings in the address bar to “encourage site operators to switch to HTTPS sooner rather than later”, also announcing a plan to eventually label all HTTP pages as non-secure. In 2016, the Chrome team announced that Chrome 56 (released in January 2017) would label HTTP pages with password and credit card fields as “not secure”, and reiterated its intent to move toward two states, secure and not secure. Chrome has been marching steadily toward that goal. Google recommends using “HTTPS everywhere” as a long term solution. In 2018, with the release of Chrome 68, Chrome began marking all HTTP sites as “not secure”.

Additionally, HTTP/2 (which introduces performance benefits) is supported by browsers only over HTTPS, and “powerful features” of HTML5 require HTTPS. These features include:

- Geolocation

- Video and Microphone

- Device motion / orientation

- Fullscreen

- Pointer locking

- Service Workers

- AppCache

- Notifications

- Web Bluetooth

Not using HTTPS can also impact your site’s analytics data. When a visitor arrives on an HTTP site from an HTTPS referrer via a link, the referrer is stripped; this traffic is labeled “dark” traffic (i.e. visits for which the origin cannot be determined) which Google Analytics records as “direct” acquisition. In contrast, traveling from an HTTP or HTTPS site to an HTTPS site will properly attribute the referral data in Google Analytics. While it is possible for external site owners to work around this by establishing a referrer policy, many sites do not.

HTTPS also prevents “man in the middle” attacks and third party content injection by malicious agents and internet service providers (ISPs) which can insert advertisements, notices, tracking code and malware and hijack traffic. The threat is real. Accordingly, HTTPS is required for government agencies.

Most domains should use www, because www prepares a site to handle the challenges of a multi-server architecture with multiple subdomains.

At the time of writing, Google, Wikipedia, Facebook, reddit and Apple redirect to www, while GitHub, Stack Overflow, Twitter, Medium and WordPress redirect to non-www.

Below I have combined the https and www redirects to reduce the number of redirects. Redirects slow page load time and leak SEO value—eliminate unnecessary redirects whenever possible.

# Redirect insecure or non-www traffic to secure, www

RewriteCond %{HTTPS} !on [OR]

RewriteCond %{HTTP_HOST} !^www\. [NC]

RewriteCond %{HTTP_HOST} ^(?:www\.)?(.*) [NC]

RewriteRule (.*) https://www.%1/$1 [R=301,L]

If you really don’t care about the benefits of www:

# Redirect insecure or www traffic to secure, no-www

RewriteCond %{HTTPS} !on [OR]

RewriteCond %{HTTP_HOST} ^www\. [NC]

RewriteCond %{HTTP_HOST} ^(?:www\.)?(.*) [NC]

RewriteRule (.*) https://%1/$1 [R=301,L]
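When testing redirects like these, it helps to know the single URL each request should land on after one hop. The following sketch computes that target for the www variant; the function name toSecureWww is hypothetical and example.com is a placeholder:

```javascript
// Hypothetical helper: compute the canonical "secure, www" form of a URL,
// i.e. the Location a single combined redirect should point to.
function toSecureWww(href) {
  const url = new URL(href);
  url.protocol = "https:";
  if (!url.hostname.startsWith("www.")) {
    url.hostname = "www." + url.hostname;
  }
  return url.href;
}

console.log(toSecureWww("http://example.com/about?x=1")); // https://www.example.com/about?x=1
console.log(toSecureWww("https://www.example.com/"));     // https://www.example.com/
```

Comparing this value with the Location header of the first response is one way to confirm that no intermediate redirect is happening.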

URL Hierarchy

Hierarchical URLs make it easier for users to navigate and search a site via “virtual breadcrumbs”, enable site owners to gather more detailed analytics data, enable easier URL migrations via redirection, and may enable more efficient crawling from search engines.

Namespaced URLs

One way to easily add hierarchy to URLs is through namespacing. Namespacing allows you to create new “top level sections” by organizing content into specific verticals. For example, rather than having blog posts live at the same level as your main content, namespace all blog content to /blog/. This keeps blog URLs isolated from the rest of your content. It’s also easy for users to remember this convention with continued usage.

GitHub does a great job of namespacing their URLs. For example:

- https://github.com/joeyhoer

  - Every path beyond this URL belongs to me

- https://github.com/joeyhoer/starter

  - Every path beyond this URL belongs to my project starter

- https://github.com/joeyhoer/starter/issues

  - Every path beyond this URL references an issue on my project starter

The simplicity and consistency in GitHub’s URL design has allowed them to scale to millions of users with millions of repositories.

URL Malleability

URLs should be malleable (“hackable”) and easily guessable: users should be able to navigate toward the root of the URL tree (to the left) by removing segments from the URL.
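One way to audit malleability is to list every URL a visitor could reach by removing segments, then check that each one returns a useful page. A minimal sketch; the function name ancestorUrls is hypothetical:

```javascript
// Hypothetical helper: list the URLs reached by removing one path segment at a time.
function ancestorUrls(href) {
  const url = new URL(href);
  const segments = url.pathname.split("/").filter(Boolean);
  const ancestors = [];
  while (segments.length > 0) {
    segments.pop(); // drop the right-most segment
    ancestors.push(url.origin + "/" + segments.join("/"));
  }
  return ancestors;
}

console.log(ancestorUrls("https://github.com/joeyhoer/starter/issues"));
// [ 'https://github.com/joeyhoer/starter',
//   'https://github.com/joeyhoer',
//   'https://github.com/' ]
```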

Query Strings for Facets

Pages should always work without query strings attached.

I like to think of querystrings as the knobs of URLs — something to tweak your current view and fine tune it to your liking.

– URL Design, Kyle Neath

I’ve written at length about why query parameters should be used for facets and sorts. Query parameters are great for URL malleability, because even if a user attempts to hack a URL and “gets it wrong”, they’ll still be on a page with relevant content.
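A sketch of how a page might read its facets so that it always works without a query string and tolerates “wrong” values. The facet names, defaults and allowed values here are hypothetical:

```javascript
// Hypothetical defaults and allowed values for a product listing view.
const DEFAULTS = { sort: "newest", color: "all" };
const ALLOWED = {
  sort: ["newest", "price", "rating"],
  color: ["all", "red", "blue"],
};

// Read facets from the query string, falling back to the default
// whenever a parameter is missing or has an unrecognized value.
function readFacets(href) {
  const params = new URL(href).searchParams;
  const facets = { ...DEFAULTS };
  for (const key of Object.keys(DEFAULTS)) {
    const value = params.get(key);
    if (value !== null && ALLOWED[key].includes(value)) facets[key] = value;
  }
  return facets;
}

console.log(readFacets("https://example.com/shoes"));
// { sort: 'newest', color: 'all' }
console.log(readFacets("https://example.com/shoes?sort=price&color=purple"));
// { sort: 'price', color: 'all' }
```

Falling back to defaults rather than returning an error is what keeps a hacked URL on a page with relevant content.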

URL Persistence

Any URL that has ever been exposed to the Internet should live forever: never let any URL die … Remember, people follow links because they want something on your site: the best possible introduction and more valuable than any advertising for attracting new customers.

– Fighting Linkrot, Jakob Nielsen

Once a URL has been minted, you have made a commitment to serve relevant content at that location for as long as possible.

It is the duty of a Webmaster to allocate URIs which you will be able to stand by in 2 years, in 20 years, in 200 years. This needs thought, and organization, and commitment.

– Cool URIs don’t change, Tim Berners-Lee

Once you’ve launched a new URL, links to that location are no longer under your control. You never know where links to your URLs may exist.

A URL is an agreement to serve something from a predictable location for as long as possible. Once your first visitor hits a URL you’ve implicitly entered into an agreement that if they bookmark the page or hit refresh, they’ll see the same thing.

Don’t change your URLs after they’ve been publicly launched. If you absolutely must change your URLs, add redirects …

– URL Design, Kyle Neath

When content is not maintained (i.e. when content is removed/archived) during an upgrade and URLs are not appropriately redirected, links from various locations die. Linkrot refers to the tendency for links to increasingly point to resources that have become permanently unavailable over time. The average life span of a web page is 9.3 years.

Linkrot equals lost business: make sure all URLs live forever and continue to point to relevant pages.

– URL as UI, Jakob Nielsen

URLs that no longer return relevant content erode the credibility of your site and all sites linking to that data.

When someone follows a link and it breaks, they generally lose confidence in the owner of the server. They also are frustrated - emotionally and practically from accomplishing their goal.

Enough people complain all the time about dangling links that I hope the damage is obvious. I hope it also obvious that the reputation damage is to the maintainer of the server whose document vanished.

– Cool URIs don’t change, Tim Berners-Lee

By the way, changing URLs can also have an impact on social sharing.

Naming URLs

URLs change when information changes. If information is subject to change, it has no place in the URL. The first step in selecting a naming convention for URLs is identifying common attributes, unique to each piece of content, which will not change over time.

Tip: For ecommerce websites, SKUs are typically the best example of a unique identifier that should not change.

Using the date which the content is published is a good place to start:

The creation date of the document—the date the URI is created—is one thing which will not change. … That is one thing with which it is good to start a URI. If a document is in any way dated, even though it will be of interest for generations, then the date is a good starter.

– Cool URIs don’t change, Tim Berners-Lee

Using the date as the sole indicator for the URL presents a number of challenges. Namely, the date does not convey any relevant information about the content of the linked page—information important for search engines and users making decisions about the quality of a link. Dates also do not scale well for sites which may have multiple pieces of content being published on the same day, the same hour, the same minute, and sometimes even in the same instant. Additionally, users are quick to judge content relevancy based on the date posted and have a prejudice against outdated content. “Information architects” (typically UX designers) avoid dating URLs because they want content to appear evergreen (always relevant); instead they choose to use the post title/subject as the URL.

Using the post title in the URL presents its own issues:

leave out … [the] subject. … It always looks good at the time but changes surprisingly fast. … This is a tempting way of organizing a web site—and indeed a tempting way of organizing anything … but has serious drawbacks in the long term. … When you use a topic name in a URI you are binding yourself to some classification. You may in the future prefer a different one. Then, the URI will be liable to break.

– Cool URIs don’t change, Tim Berners-Lee

As an example, imagine you’ve written a piece of evergreen content titled “Google’s Various Entreprises” and you discover that you’ve misspelled “Enterprises”, and then Google changes its name to Alphabet, and perhaps you decide later that “Various Ventures” has a nice sound and would be better suited to SEO. What happens to the URL then? Either the URL must change (and be redirected) or your content’s address is permanently mislabeled.

Medium and Stack Overflow have both come up with interesting solutions to this issue. Both companies assign an ID to each post and include the ID in the URL along with the current post title. The important thing to note is that in these romanticized URLs the title in the URL is entirely optional. For example:

- Stack Overflow Question ID 10712555

- Medium Post ID adbec59c7cf4

  - Minimal URL: https://medium.com/@torgo/adbec59c7cf4

  - Augmented URL: https://medium.com/@torgo/another-title-adbec59c7cf4

  - Full URL: https://medium.com/@torgo/in-defense-of-the-url-adbec59c7cf4

As long as the ID appears in the correct location in the URL, the URL redirects to the correct content. This solution works because it creates persistent URLs that are flexible enough to support romanticized SEO titles which may change over time while enabling scalability.
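The idea can be sketched as follows. This is not Medium’s or Stack Overflow’s actual implementation; the ID format (12 lowercase hex characters at the end of the last path segment), the function names and the lookup table are all assumptions made for illustration:

```javascript
// Assumption: a post ID is 12 lowercase hex characters at the end of the
// last path segment, optionally preceded by a title and a hyphen.
const POST_ID = /(?:^|-)([0-9a-f]{12})$/;

// Hypothetical lookup table standing in for a database of current titles.
const CURRENT_SLUGS = new Map([["adbec59c7cf4", "in-defense-of-the-url"]]);

function extractPostId(pathname) {
  const lastSegment = pathname.split("/").filter(Boolean).pop() || "";
  const match = POST_ID.exec(lastSegment);
  return match ? match[1] : null;
}

// Return the path that every variant of the URL should redirect to,
// or null when the ID is missing or unknown.
function canonicalPath(pathname) {
  const id = extractPostId(pathname);
  if (id === null || !CURRENT_SLUGS.has(id)) return null;
  const author = pathname.split("/").filter(Boolean)[0]; // e.g. "@torgo"
  return `/${author}/${CURRENT_SLUGS.get(id)}-${id}`;
}

console.log(canonicalPath("/@torgo/adbec59c7cf4"));
// /@torgo/in-defense-of-the-url-adbec59c7cf4
console.log(canonicalPath("/@torgo/another-title-adbec59c7cf4"));
// /@torgo/in-defense-of-the-url-adbec59c7cf4
```

Only the ID is used for the lookup, so the title portion can change at any time without breaking links that were shared earlier.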

reddit also takes a similar approach, while YouTube uses an 11 character base64 encoded ID for each uploaded video. This technique allows these companies to easily scale into the foreseeable future.
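To get a feel for the scale, the number of distinct IDs is simple arithmetic. A quick sketch, assuming all 64 symbols may appear at each of the 11 positions:

```javascript
// 11 characters, each drawn from a 64-symbol alphabet: 64^11 = 2^66 possible IDs.
const idSpace = 64n ** 11n;

console.log(idSpace.toString()); // 73786976294838206464
```

That is roughly 7.4 × 10^19 possible identifiers.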

Migrating URLs via Redirection

A solid URL migration strategy is a critical element of any site migration. Do not neglect to redirect URLs appropriately when changing technology and migrating content.

One-Time Use URLs

When a user completes an action, they are often presented with a success page/message. Ideally, every user-targeted view on a site would have a unique URL that can be saved. Typically, refreshing a “success page” will return the user to a previous state in the conversion process, meaning success pages cannot be saved or shared. One-time-use URLs should be avoided. Allow users to refresh the success page to retrieve their order number, subscription information, etc. Better yet, add useful information to that page, just in case the user needs to change something.

In the past, the development community loved to create URLs which could never be re-used. I like to call them POST-specific URLs—they’re the URLs you see in your address bar after you submit a form, but when you try to copy & pasting the URL into a new tab you get an error.

There’s no excuse for these URLs at all. Post-specific URLs are for redirects and APIs—not end-users.

– URL Design, Kyle Neath